Introduction

In the early days of large language models (LLMs), crafting the perfect prompt was everything. Then came “context engineering”—feeding models extra documents, database dumps, and system messages to keep them on track. While these approaches powered demos and small proofs of concept, they buckle under real-world complexity. Underlabs Inc’s Apposphere flips the script: we automate every piece of context, instruction, and validation in code, so your AI workflows scale, adapt, and remain auditable without endless manual edits.

Why Prompt & Context Engineering Fall Short

Prompt engineering relies on “magic words” that break whenever the model updates or your wording shifts. Maintaining dozens of prompt variants becomes a full-time job, and no amount of tweaking forces true understanding in complex business logic.

Context engineering adds retrieval-augmented generation (RAG), JSON templates, and system messages to supply background. It helps accuracy, but creates a mounting pile of manual curation: engineers scramble to collect, summarize, and update context snippets each time data models evolve. Large context windows slow performance, and tracing a hallucination back to its source can feel impossible.

Automated Workflow Architecture: A Scalable Alternative

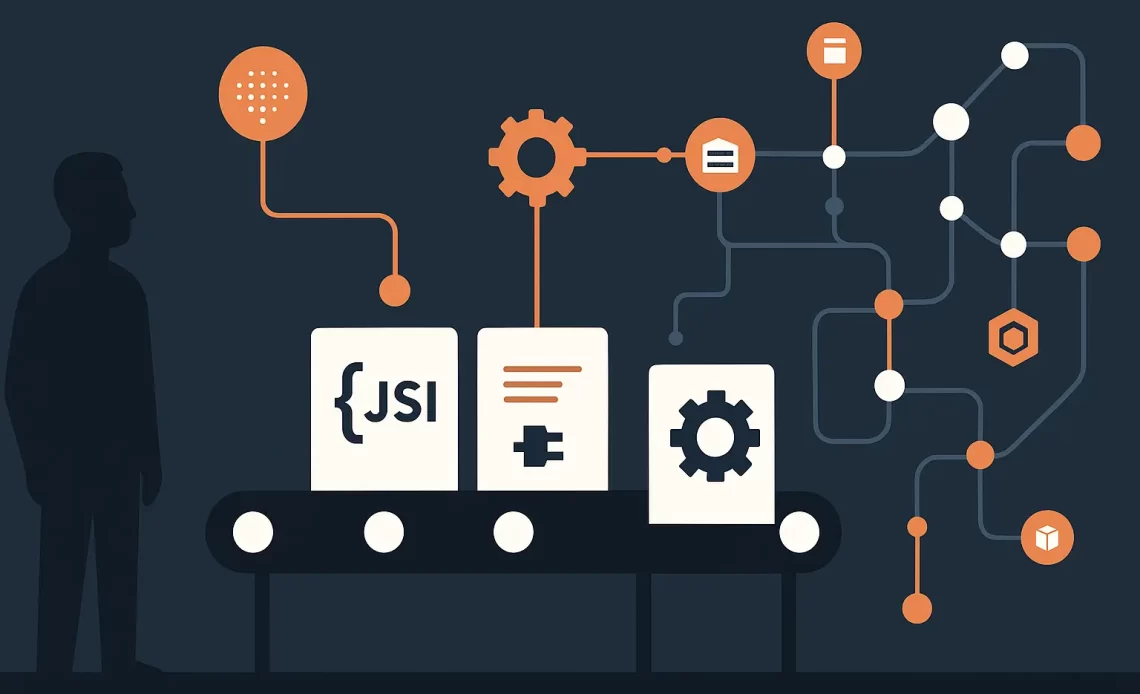

Underlabs Inc’s Apposphere treats LLM systems like modern manufacturing lines. Instead of hand-crafting prompts or assembling context by hand, we:

- Introspect your live database schemas to auto-generate JSON contexts

- Decompose tasks into narrow, atomic steps with clear inputs and outputs

- Orchestrate each step in code, feeding the model only what it needs

- Validate every response against schema rules and business logic

- Monitor performance and data lineage end to end

Core Components

Schema-Driven Context

We use tooling like sequelize-auto or sqlacodegen to extract your entities, fields, and relationships from the live database. The generated models are then converted into up-to-date JSON Schema definitions that become the single source of truth for every workflow step.
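To make the idea concrete, here is a minimal sketch of schema introspection. It is not the Apposphere implementation: it assumes a SQLite database (queried through Python's built-in `sqlite3` module) in place of sequelize-auto or sqlacodegen, and the `table_to_json_schema` helper, the `contract` table, and the type mapping are all hypothetical.

```python
import json
import sqlite3

# Hypothetical mapping from SQLite column types to JSON Schema types.
SQL_TO_JSON_TYPE = {"INTEGER": "integer", "REAL": "number", "TEXT": "string"}


def table_to_json_schema(conn: sqlite3.Connection, table: str) -> dict:
    """Build a JSON Schema object definition from a table's live column metadata."""
    # PRAGMA table_info yields (cid, name, type, notnull, dflt_value, pk) per column.
    # The table name is interpolated directly, so it must come from trusted code.
    columns = conn.execute(f"PRAGMA table_info({table})").fetchall()
    properties = {}
    required = []
    for _cid, name, sql_type, notnull, _default, _pk in columns:
        json_type = SQL_TO_JSON_TYPE.get(sql_type.upper(), "string")
        properties[name] = {"type": json_type}
        if notnull:
            required.append(name)
    return {
        "title": table,
        "type": "object",
        "properties": properties,
        "required": required,
    }


# Illustrative table; a real deployment would point at the production schema.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE contract (id INTEGER NOT NULL, title TEXT NOT NULL, value REAL)"
)
contract_schema = table_to_json_schema(conn, "contract")
print(json.dumps(contract_schema, indent=2))
```

Because the schema is derived from the database at run time, adding a column to the table changes the generated context without anyone editing a prompt.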

Atomic Step Pipelines

Workflows are broken into focused operations—“Extract Parties,” “Identify Obligations,” “Summarize Deadlines,” and so on. By limiting each AI call to one clear task, you reduce ambiguity, avoid token waste, and make debugging straightforward.
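One way to picture an atomic step is as a small record with a single declared input and a single declared output. The sketch below is illustrative only: the `Step` class, `run_steps` function, and the stub functions are hypothetical names, and the stubs stand in for real single-purpose LLM calls.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass(frozen=True)
class Step:
    """One atomic operation: reads one key from the shared state, writes one key."""
    name: str
    input_key: str
    output_key: str
    run: Callable[[Any], Any]


def run_steps(steps: List[Step], state: Dict[str, Any]) -> Dict[str, Any]:
    """Run steps in order; each step receives only its declared input."""
    for step in steps:
        if step.input_key not in state:
            raise KeyError(f"{step.name}: missing input '{step.input_key}'")
        result = step.run(state[step.input_key])
        state = {**state, step.output_key: result}
    return state


# Stub functions stand in for single-purpose LLM calls (assumption for this sketch).
def extract_parties(contract_text: str) -> List[dict]:
    return [{"partyName": "Acme Corp", "role": "buyer"}]


def count_parties(parties: List[dict]) -> int:
    return len(parties)


pipeline = [
    Step("Extract Parties", "contract_text", "parties", extract_parties),
    Step("Count Parties", "parties", "party_count", count_parties),
]
final_state = run_steps(pipeline, {"contract_text": "Acme Corp agrees to buy ..."})
print(final_state["party_count"])
```

When a step fails, the error names the step and the missing input, which is what makes step-level debugging practical.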

Orchestration Engine

Our lightweight runner:

- Fetches context from an internal API (e.g., /context/extract-parties)

- Renders the prompt using a template and that context

- Calls the LLM

- Validates the output against JSON Schema

- Streams results to the next step

Declarative workflow definitions (YAML or JSON) let you update pipelines without rewriting code and without spending tokens on trial-and-error prompt testing.
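The runner loop described above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the actual engine: the workflow format, `fetch_context`, `call_llm`, and `run_workflow` are hypothetical, the context fetch and model call are stubbed with canned data, and validation is reduced to a required-keys check where a real runner would use a full JSON Schema validator.

```python
import json
from string import Template

# Hypothetical declarative workflow; in practice this would be loaded from a file.
WORKFLOW = json.loads("""
{
  "steps": [
    {
      "name": "extract-parties",
      "context_path": "/context/extract-parties",
      "prompt": "Schema: $context. Contract: $input. Return the parties as a JSON array.",
      "required_keys": ["partyName", "role", "contact"]
    }
  ]
}
""")


def fetch_context(path: str) -> str:
    """Stand-in for an HTTP GET against the internal context API."""
    return json.dumps({"title": "Contract", "type": "object"})


def call_llm(prompt: str) -> str:
    """Stand-in for a real model call; returns a canned JSON response."""
    return json.dumps(
        [{"partyName": "Acme Corp", "role": "buyer", "contact": "legal@acme.example"}]
    )


def validate(items: list, required_keys: list) -> None:
    """Simplified check: every object must carry all required keys."""
    for item in items:
        missing = [key for key in required_keys if key not in item]
        if missing:
            raise ValueError(f"missing keys: {missing}")


def run_workflow(workflow: dict, user_input, fetch=fetch_context, llm=call_llm):
    data = user_input
    for step in workflow["steps"]:
        context = fetch(step["context_path"])                 # 1. fetch context
        prompt = Template(step["prompt"]).substitute(
            context=context, input=data
        )                                                     # 2. render the prompt
        output = json.loads(llm(prompt))                      # 3. call the LLM
        validate(output, step["required_keys"])               # 4. validate the output
        data = output                                         # 5. pass to the next step
    return data


parties = run_workflow(WORKFLOW, "Acme Corp agrees to buy ...")
print(parties)
```

Adding a step then means adding an entry to the workflow definition rather than changing `run_workflow`.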

Validation & Observability

Every AI response is run through a JSON Schema validator (e.g., Ajv or Python’s jsonschema). Failures trigger retries or human-in-the-loop alerts. OpenTelemetry traces and Grafana dashboards then give you full visibility, from raw user input through each step to the final output, complete with latency metrics and error rates.
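A rough sketch of the validate-then-retry behaviour follows. It uses only the standard library so it runs anywhere: the `missing_required` check covers just the `required` keyword and stands in for a real validator such as jsonschema or Ajv. `call_with_validation`, `OBLIGATION_SCHEMA`, and the canned responses are hypothetical, and tracing is left out.

```python
import json
from typing import Callable, List, Tuple

# Hypothetical schema fragment for an obligation object.
OBLIGATION_SCHEMA = {"type": "object", "required": ["description", "dueDate"]}


def missing_required(schema: dict, value: dict) -> List[str]:
    """Return the required keys absent from value (a tiny subset of JSON Schema)."""
    return [key for key in schema.get("required", []) if key not in value]


def call_with_validation(
    call_model: Callable[[], str], schema: dict, max_attempts: int = 3
) -> Tuple[dict, int]:
    """Retry until the output validates; raise so a human can review otherwise."""
    errors = []
    for attempt in range(1, max_attempts + 1):
        raw = call_model()
        try:
            parsed = json.loads(raw)
        except json.JSONDecodeError:
            errors.append(f"attempt {attempt}: response was not valid JSON")
            continue
        if not isinstance(parsed, dict):
            errors.append(f"attempt {attempt}: expected a JSON object")
            continue
        missing = missing_required(schema, parsed)
        if not missing:
            return parsed, attempt
        errors.append(f"attempt {attempt}: missing {missing}")
    raise RuntimeError("escalate to human review: " + "; ".join(errors))


# Canned responses simulate a model that needs two retries.
responses = iter([
    "not json",
    '{"description": "Pay invoice"}',
    '{"description": "Pay invoice", "dueDate": "2025-01-31"}',
])
result, attempts = call_with_validation(lambda: next(responses), OBLIGATION_SCHEMA)
print(result, attempts)
```

The accumulated error list is the natural payload for an alert or a trace attribute, since it records why each attempt was rejected.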

Key Benefits

- Automatic Scalability: Context adapts as your data model changes—no manual prompt edits.

- Reliable Maintenance: Debug at the step level, not in cryptic prompt wording.

- Stronger Compliance: Every response is validated and fully traceable for audits.

- Faster Time-to-Market: Engineers focus on new features, not prompt upkeep.

Real-World Example: Contract Review Pipeline

- Extract Parties

Context: JSON Schema for “Contract” entity.

LLM Task: Return an array of { partyName, role, contact }.

Validation: Ensure every object has required fields.

- Identify Obligations

Context: Schema for “Obligation.”

LLM Task: Extract obligations as { description, dueDate }.

Validation: Check formats and keys.

- Summarize Deadlines

Code-only step: Format due dates into human-readable bullet points.

- Compliance Check

Rule Engine: Flag missing clauses (e.g., confidentiality, indemnity).

Add new fields, such as indemnity clauses, and schema introspection picks them up automatically, so no one has to hand-edit a prompt to keep up.
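The last two steps of the contract review pipeline need no model call at all. The sketch below shows one possible shape for them. The function names are hypothetical, due dates are assumed to be ISO 8601 strings, and the keyword match is a deliberately crude stand-in for a real rule engine.

```python
from datetime import date
from typing import Iterable, List

# Clauses the compliance check looks for (taken from the example above).
REQUIRED_CLAUSES = ("confidentiality", "indemnity")


def summarize_deadlines(obligations: List[dict]) -> List[str]:
    """Code-only step: turn { description, dueDate } objects into bullet lines."""
    lines = []
    # ISO 8601 date strings sort chronologically as plain strings.
    for obligation in sorted(obligations, key=lambda item: item["dueDate"]):
        due = date.fromisoformat(obligation["dueDate"])
        lines.append(f"- {due.strftime('%d %B %Y')}: {obligation['description']}")
    return lines


def missing_clauses(
    contract_text: str, required: Iterable[str] = REQUIRED_CLAUSES
) -> List[str]:
    """Naive rule: a clause counts as present if its keyword appears in the text."""
    text = contract_text.lower()
    return [clause for clause in required if clause not in text]


obligations = [
    {"description": "Pay invoice", "dueDate": "2025-03-15"},
    {"description": "Deliver goods", "dueDate": "2025-01-31"},
]
deadline_lines = summarize_deadlines(obligations)
flags = missing_clauses("This agreement includes a Confidentiality clause.")
print("\n".join(deadline_lines))
print("Missing clauses:", flags)
```

Keeping these steps in plain code makes them deterministic and cheap to test, which is the reason to reserve LLM calls for the extraction steps.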

Getting Started with Underlabs Inc Apposphere

- Discovery Workshop: We assess your data model, AI use cases, and compliance needs.

- Pilot Deployment: Launch a proof-of-concept pipeline (e.g., contract review) in 2–4 weeks.

- Scale & Optimize: Expand across teams, integrate dashboards, and automate schema updates.

Ready to transform your AI initiatives? Contact Underlabs today to schedule a demo and see how automated workflow architecture can future-proof your operations.